Looping Gaussian Process Noise

For a talk, I wanted to use a random trajectory as a demonstration of unpredictable variables that needed to be controlled (hence, the benefits of closed-loop control as an experimental technique when studying complex systems). I thought it would look nice to have this random trajectory as a GIF in my presentation and even nicer if it looped. I realized Gaussian processes (GPs) could do the job.

Brief review of Gaussian processes

GPs model a random process as a multivariate normal distribution, where each variable corresponds to one point along an index (often one-dimensional, time). In this case we’ll refer to the entire random process of length $n$ as $X \in \mathbb{R}^n$ $$ X \sim \mathcal{N}(\mu, \Sigma) $$

The trick to GPs’ capturing the desired structure is how the covariance matrix $\Sigma$ is formed: each element $\Sigma_{ij}$ is assigned with a kernel function $k(t_i, t_j)$. The most common kernel is the squared exponential, a.k.a., the Gaussian kernel: $$ k_\text{SE}(t_i, t_j) = \sigma^2 \text{exp}\left( - \frac{(t_i - t_j)^2}{2\ell^2} \right)$$ where $\sigma$ is the standard deviation around the mean and $\ell$ determines the time scale of the noise.

This is what it looks like, along with some random samples produced with it:

import numpy as np

import matplotlib.pyplot as plt

%config InlineBackend.figure_formats = ['svg']

# the kernel

def k_SE(t_i, t_j, sigma=1, l=1):

return sigma**2 * np.exp(-(t_i - t_j)**2 / (2 * l**2))

# T will come up later as the period for looping; ignore for now

T = 15

n = 300 # number of points at which we'll sample

t = np.linspace(0, T, n) # divide signal length into n points

def get_Sigma(kernel, T):

# construct Sigma covariance matrix using the kernel

t_i, t_j = np.meshgrid(t, t)

return kernel(t_i, t_j)

def plot_kernel(kernel, T):

fig, (ax1, ax2) = plt.subplots(2, 1, sharex=True)

ax1.plot(t, kernel(t, np.zeros_like(t)))

ax1.set(title='kernel', ylabel=r'$ k(t_i-t_j) $',

xlabel='$|t_i-t_j|$')

num_samples = 3

Sigma = get_Sigma(kernel, T)

x = np.random.multivariate_normal(np.zeros_like(t), Sigma, num_samples)

ax2.plot(t, x.T)

ax2.set(title=f'{num_samples} random samples', ylabel='$x(t)$', xlabel='$t$')

fig.tight_layout()

plt.show()

plot_kernel(k_SE, T)

You’ll notice that the kernel defines the behavior of the process: high covariance between indices close to each other limits how much any two points will vary, and when that interval approaches 0 and the covariance approaches 1, two neighboring samples will converge on the same point. To get a process to loop, we need to replicate what is happening for nearby points around the edges where we want the process to wrap on itself. We do this by constructing a kernel with the squared exponential shape both for small distances and at some distance $T$—our desired period.

# the kernel

def k_looping_SE(t_i, t_j, sigma=1, l=1):

return k_SE(t_i, t_j, sigma, l) \

+ k_SE(t_i - T, t_j, sigma, l) \

+ k_SE(t_i + T, t_j, sigma, l)

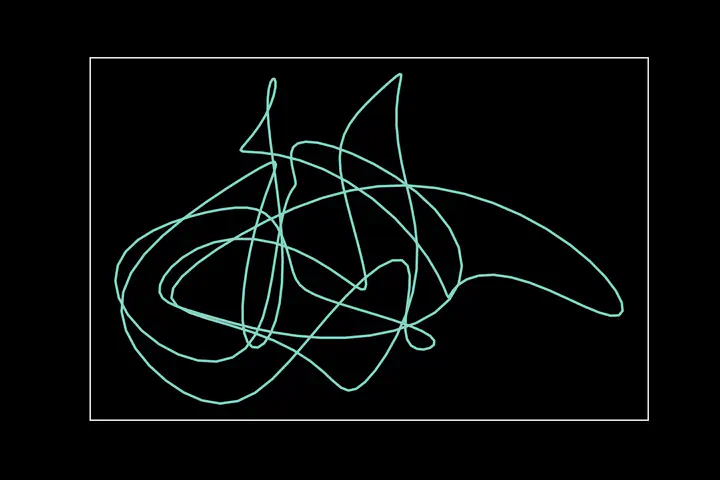

plot_kernel(k_looping_SE, T)

This time if you look at the edges of the graph you’ll see that they loop together perfectly! Time to generate some looping animations.

import matplotlib.animation as anim

from matplotlib import rc

rc('animation', html='html5', bitrate=2000)

n_anim = 3

fig, axs = plt.subplots(1, n_anim, figsize=(2*n_anim, 2))

Sigma = get_Sigma(k_looping_SE, T)

x = []

points = []

tails = []

for j in range(n_anim):

x.append(np.random.multivariate_normal(np.zeros_like(t), Sigma, 2))

full_traj = axs[j].plot(x[j][0], x[j][1], alpha=.3)[0]

color = full_traj.get_color()

points.append(axs[j].plot([], [], marker='o', c=color)[0])

tails.append(axs[j].plot([], [], '-', c=color)[0])

def animate(i):

for j in range(n_anim):

points[j].set_data(x[j][0, i], x[j][1, i])

tail1 = np.take(x[j][0], range(i-10, i+1), mode='wrap')

tail2 = np.take(x[j][1], range(i-10, i+1), mode='wrap')

tails[j].set_data(tail1, tail2)

return points + tails

ani = anim.FuncAnimation(fig, animate, range(n), interval=10, blit=True)

plt.close() # to not show static fig as well as animation

ani